During Senate Estimates in December, I asked the eSafety Commissioner why social media platforms like X are being targeted, while Bluesky — a known hangout for the left — seems to be getting a free pass.

The Commissioner claimed there’s no “political bias” and that Bluesky has not been exempted; eSafety is just focusing on where most of the kids are. She called Bluesky a “young company” that’s still finding its feet. It looks like a double standard to me: conservative platforms get targeted, while “left-wing hangouts” get a free pass for being “low risk.”

Government shouldn’t be picking winners and losers based on politics. We need transparency, not a “dynamic list” that changes whenever a bureaucrat feels like it. Whether it’s the Labor Party or the Coalition, Australians are sick of the double standards and the “Big Brother” tactics.

I’ll keep speaking up to make sure your voice isn’t silenced by bureaucratic overreach. We need one rule for everyone, and total protection for our free speech.

— Senate Estimates | December 2025

Transcript

CHAIR: It wasn’t my intention.

Senator ROBERTS: No, I know that. Thank you for appearing again. I have, perhaps, an insight. Since COVID, people in Australia are very wary of government. That’s not just the Labor Party; that’s both. Commissioner, you have exempted Bluesky from your under-16 social media minimum-age restrictions, yet Bluesky is almost identical to X, as I understand it. It currently allows 13-year-olds or younger people saying they are 13 to sign up, and they have no age verification. Do you understand, Commissioner, that you have an obligation to discharge your duties without the perception of political bias? Your decision to exempt a left-wing hangout and to include a conservative hangout, X, looks like political bias.

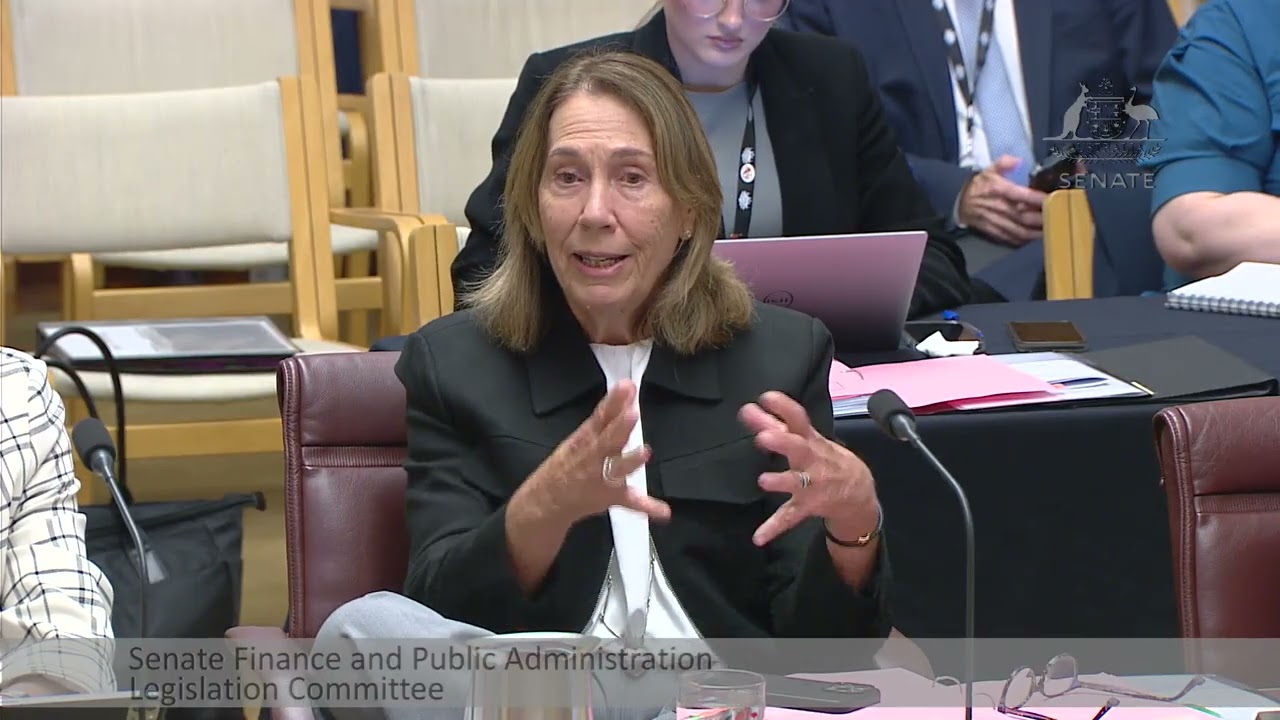

Ms Inman Grant: Bluesky has not been exempted. They present a very low risk. They have actually identified themselves as an age-restricted social media platform. They probably have 50,000 Australian users—a very small number of young users. They’re building up their age inference tools. They’re a very young company. What we’ve decided to do—we’re talking to a range of companies that could be age restricted social media platforms, whether it’s Yubo, Yope, Lemon8 or other ones that we know we’re going to go to. But you missed the opening statement, where I said our focus—these assessments that we’re doing are voluntary. I don’t have specific declaratory powers in terms of who is in and who is out, so I can’t say anyone is exempted. It’s up to the legal teams of those companies to determine whether they’re in or out. Where we will focus our compliance is where the vast majority of young people are. For the purposes of transparency, fairness and due process, we developed the self-assessment tools. Then we did some initial assessments so that we could at least have a body of major companies that would fit the criteria set forth by parliament. We’ve got 10 that we’re starting with, but I’ve always said that this will be a dynamic list. If we see that there are significant migratory patterns with young people that are going over to Bluesky—again, we’ve had three conversations with them—we expect that they will start applying some of their age assurance tools. They’re just at the beginning of that journey.

Senator ROBERTS: So what you’re saying, Commissioner, as I interpret it, is that you’ve got objective criteria that you assess platforms against.

Ms Inman Grant: We developed a self-assessment tool, so there are consistent assessment criteria. The criteria we have to use are the criteria that was in the legislation that parliament passed. That primary test is around whether or not a particular site—if it didn’t meet an exclusion, say, the messaging exclusion, the online gaming exclusion or the education and mental health exclusion, we had to do a sole-and-significant-purpose test. If its sole or significant purpose was online social interaction, then our preliminary view—it is not a determination—was that they were an age restricted social media platform.

Mr Fleming: Senator, just to give you a pointer, it’s in section 63C of the Online Safety Act. The criteria are set out in the act, and, if someone meets those criteria, there are a set of rules that the minister made. If the rules apply to that platform, then they’re out of the scheme. That’s how it works. To reinforce the point the commissioner made, there’s no determination that platforms are in or out. We’ve just expressed our preliminary view based on our assessments against the criteria, like the platforms can do for themselves. Then we focused on where most of the kids are, and that’s where we’re going to focus our initial efforts.

Senator ROBERTS: Thank you. Minister, your government chose to use legislation against social media platforms. However, the commissioner has then included search engines in the scope of age restrictions, using an industry code under the Online Safety Act. Couldn’t you have simply done the whole thing under existing powers and created an industry code of practice, mandatory if necessary, for age control of social media instead of this whole blunt instrument legislation—an industry code as opposed to enforcement?

Senator Green: I’ll let the eSafety Commissioner answer because there would have been advice given to government about the best way forward. This is a very important step forward that we’re taking, and legislation was required.

Senator ROBERTS: Well, I asked you because I’m not allowed to ask—

Senator Green: No, you are allowed to—

Senator ROBERTS: for an opinion of an officer.

Senator Green: No, it’s not an opinion.

Senator ROBERTS: If the minister wants you to, that’s fine.

Senator Green: You’re asking why legislation was required. They can answer that question.

Ms Inman Grant: The industry codes were included in the Online Safety Act of 2021 under the then coalition government. What they decided was that they would split the technology industry into eight different sectors, from search engines to social media sites to ISPs to some broader categories, including the designated internet services and relevant electronic services. What Paul Fletcher, who was my minister at the time, decided was that he wanted to continue the tradition of co-regulation that had existed for many years across telecommunications and ensure that the industry developed the codes. We would decide whether or not they met appropriate community safeguards. If they did, we’d register them. If they did not, then I would create standards, and that would be a disallowable instrument that would require additional parliamentary scrutiny. It took 4½ or five years for all this deliberation, for this to happen. In most other jurisdictions, the regulator writes the code, but, with respect to the search engine code that I think you’re referring to, I don’t know if you missed the interaction I just had with Charlotte Walker—

Senator ROBERTS: I did.

Ms Inman Grant: They were written by Google and Bing, and they pretty much codify safe search practices that are used today. So, come 27 December, if you’re searching the internet and you come across violent pornography or explicit violence, it will be blurred. This is because 40 per cent of kids tend to come across this kind of violent conflict. The search engine is the gateway, and it’s unexpected, it’s unsolicited and it’s in their face. If you’re an adult and you want to continue through, you can do that. You only have to be age verified if you decide to search the internet with a Google account on, for instance, and a lot of families may choose to have a Google account on so that they can have different age-inappropriate settings set up. But, if you’re concerned about it, you just use DuckDuckGo, Bing or whatever other one. The other thing that I think is really important about the search engine code is that, if there’s a person in distress who is seeking to take their life, rather than the search engine taking them directly to a lethal-method site, it will redirect them in the first instance to an Australian mental health support provider. We all know that suicide is a terribly damaging thing for families and communities. So, if we can give someone in distress the support that they need rather than the directions in terms of how to take their life, any family would be grateful.

Senator ROBERTS: I’m sure they would. X currently—

Senator Green: I’m sorry, Senator, I misunderstood your question at the beginning. I thought you were asking about the minimum age legislation, so I apologise.

Senator ROBERTS: That’s alright.

Senator Green: I understand now what you were asking, and the eSafety Commissioner has given a very good answer.

Senator ROBERTS: X currently has, in early deployment, routines which do the following: pattern matching to determine age without the use of personal identifiers, such as a digital ID; PIN-protected parental controls—I tend to think government should not be undermining parents—to allow parents to set guardrails for their children on content that will be granulated to individual accounts, keywords or topics; and interaction monitoring to identify what could be harassment based on the pattern of posting, the words used and the ages of the people involved to stop offending posts being seen by anyone but the poster. If industry can do this by themselves, why did we need legislation? Why wasn’t a simple code of practice used instead of this ‘big brother, big stick’ drama?

Ms Inman Grant: Is that a question? I would just say in response—

Senator ROBERTS: It looks like the platforms are developing new technology.

Ms Inman Grant: I would just say that we had a very constructive meeting with X. They walked us through a number of the tools. They did say they were going to use age assurance with Grok, which could have some interesting outcomes. But a large number of parents don’t utilise parental controls. Sometimes it’s because they’re too difficult for parents to find or to work. This was a bipartisan act that the parliament obviously started. The momentum started in South Australia and then in New South Wales. But my view, after talking to so many of the ministers, the Prime Minister and the opposition leader, who supported it, was that they wanted to do something monumental. They wanted to create a significant normative change.

Senator ROBERTS: That’s what scares us.

Ms Inman Grant: One normative change that isn’t scary, I would think, is that we know that 84 per cent of eight- to 12-year-olds already have social media accounts, and, in 90 per cent of cases, parents have helped them set them up. Why? Because they wanted them to be exposed to harm early? No. It’s because they’re concerned that their kids’ friends are all on the sites and their kid will be excluded. What this change does is delay them from being exposed to all the harmful and deceptive design features. They can also sit down with their kids and say: ‘Hey, you’re not ready for this. You’re not going to be on it and your friends shouldn’t be on it either.’ So it takes the FOMO, exclusionary element out of it, and this is what we’ll be measuring.

Senator ROBERTS: So the government excludes them instead of their friends? It should be the parents, shouldn’t it?

Ms Inman Grant: They’re setting a standard like you’d set a drinking age or the age for cigarettes. They’re setting an age for social media that they think is the right age and—

Senator ROBERTS: Let’s move on. Commissioner, the search engine code included a grace period of 12 months to allow search companies to write their code to comply. As I just indicated, social media companies are close to a technological solution that will also solve their compliance. Will you allow a grace period to allow social media companies to properly write, test and deploy age-verification technology in an orderly manner—in other words, delay?

Ms Inman Grant: We’re following the letter of the law, but what we’ve said is that we are looking for systemic failures. We don’t expect accounts to immediately disappear overnight. We also have another requirement beyond the deactivating of the under-16 accounts on 10 December, which is preventing under-16s from creating accounts. We accept that that’s going to be a longer-term journey for a lot of these companies, and many that we’re talking about here already have very sophisticated age-inference tools or AI tools. Some of them will be supplementing them with third-party tools that have been tested with the age assurance technical trial. Again, they’re taking a layered approach. We will watch closely. If they have glitches, we’ll talk to them about it. What we care about is that they’re clear with us about the tools and the success of validation or the layered approach they plan to take. If it’s not working, the other requirement is continued improvement, which the technology is doing every day. So in some ways we will be providing a grace process.

Senator ROBERTS: It seems that you accept that this rushed introduction with insufficient time for social media companies to get the software right, with no time for testing and very little public education, could be a recipe for chaos.

Ms Inman Grant: I think they’ve had plenty of time and they’re all technically capable of achieving this.

CHAIR: Senator Roberts, noting the time, we’re due to take a short break. Do you have a final question? Then we’ll take a break and rotate the call after that.

Senator ROBERTS: Why was the decision made to time the introduction for school holidays, which is when children will be wanting to access social media to stay in contact with their friends, sports and activities?

Ms Inman Grant: It was written into the legislation.

Senator ROBERTS: It was one of the reasons we opposed it.

Senator CANAVAN: It’s killed Christmas.

Ms Inman Grant: That’s a legitimate concern. Kids are—

Senator CANAVAN: They’ll get new gadgets that they won’t be able to use.

Ms Inman Grant: Only for gaming.

CHAIR: That’s a good note.